Artificial intelligence is now used to predict crime, but is it biased?

This document explores what might become tomorrow's tool for reducing crime on a large scale.

-

The Idea of Progress

- The idea behind this technology is to make society safer. However, it raises important ethical questions that cannot be ignored.

- A society without crime would be a major step forward for public safety, and the technology developed to pursue this goal is itself an advance in how we can use data collected from the population. In other words, it shows what big data can achieve when it is applied to a specific purpose.

- It gives the police a new tool, but will this tool help everyone, especially if it is biased?

-

Spaces and Exchanges

- This type of technology will change the way people interact. If an artificial intelligence can determine whether or not you are about to commit a crime, it might discourage many people from even considering one.

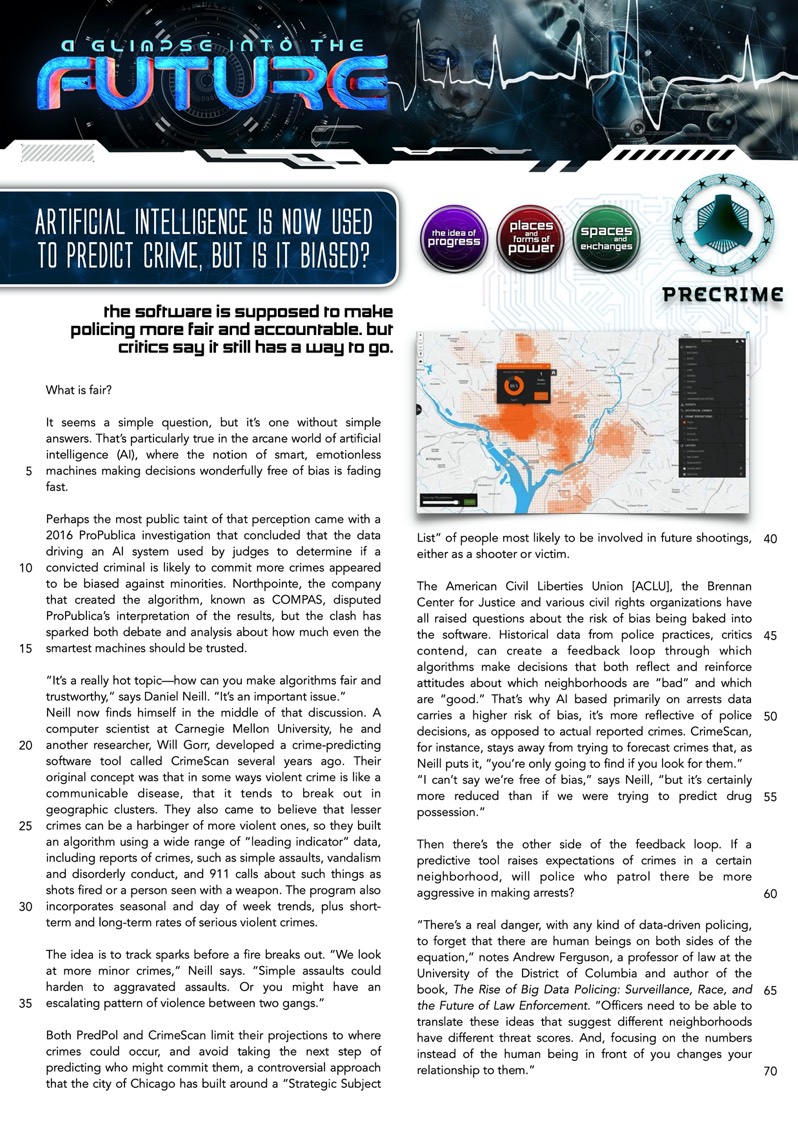

- For police services, the areas they patrol will be treated as data processed by a computer. Efficiency will no longer come from decisions made by police officers but from an algorithm.

- It will cast suspicion on the areas that the AI flags as potential threats, removing the human factor from the decision. People will then be seen only as data, numbers and statistics, no longer as individuals in all their complexity. So, in a way, it may dehumanize our environment and possibly the way we interact with our own neighbors.

-

Places and Forms of Power

- This type of technology will give a lot of power to the police and to anyone who wants to control the population. If we can indeed predict crimes, what is the next step? Should we arrest people simply because a computer suggested that they might commit a crime?

- AI will gain more and more power. If AI gains power over human beings, for example through police or judicial decisions, we may have reason to fear what comes next.

- AI, as designed here, is described as a "black box" algorithm because nobody can fully explain why it reached a given decision. Should we then empower a machine to make decisions about real human beings when we do not know its "motivations"?

- Arcane: esoteric / obscure

- Bias: preference / partiality / leaning

- To fade: to diminish / to die away

- To spark: to give off sparks

- Trustworthy: reliable / worthy of trust

- Issue: problem

- Cluster: group / community

- Harbinger: early sign / forerunner

- To forecast: to predict

- Albeit: although / though

- Seldom: rarely

- Every day, people post photos on Facebook, read articles on their smartphones, and pay by credit card.
